Layers

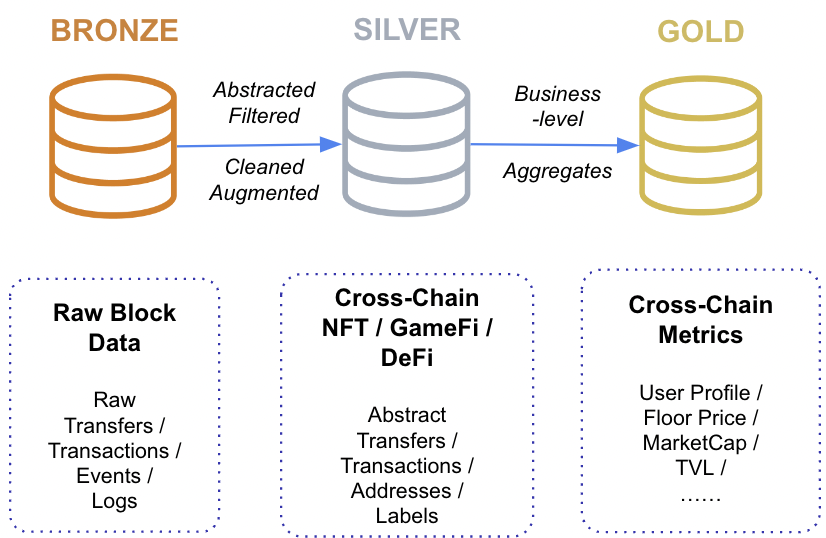

After processing and saving transactions in raw form, our ETL pipeline asynchronously applies further logic: calculating business metrics, categorizing transactions, dividing them into groups, assigning appropriate labels, and running other custom aggregations. The resulting business metrics are stored in tables, just as the raw transactions are. Tables that store raw blockchain data belong to the Bronze layer; tables that store business metrics belong to the Silver and Gold layers.

Let's go through each layer in turn and describe its purpose and features:

Bronze

Raw, unmodified data that provides detailed records of all activity on the blockchain. For EVM networks, for example, this layer stores transactions, logs, traces, etc. in the same form in which the blockchain itself stores them. You can query this data directly, but extracting useful insights from it requires a good understanding of the internal logic of the smart contracts involved.
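To illustrate why raw Bronze data demands knowledge of contract internals, here is a minimal Python sketch that decodes a single raw EVM log into an ERC-20 transfer. It assumes each log is a dict with the `address`, `topics` and `data` fields of a standard JSON-RPC log object; the function names, addresses and amount are made up for illustration, and this is not Footprint's actual decoding code.

```python
from typing import Optional

# topic0 of the standard ERC-20 event Transfer(address,address,uint256),
# i.e. the keccak-256 hash of that event signature.
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


def topic_to_address(topic: str) -> str:
    # Indexed addresses are left-padded to 32 bytes; the address is the last 20 bytes.
    return "0x" + topic[-40:].lower()


def decode_erc20_transfer(log: dict) -> Optional[dict]:
    """Return a readable transfer, or None if the log is not an ERC-20 Transfer."""
    topics = [t.lower() for t in log["topics"]]
    # ERC-20 Transfer logs carry 3 topics (signature, from, to); ERC-721 logs with
    # the same signature carry a 4th (the indexed token id), so check the count too.
    if len(topics) != 3 or topics[0] != TRANSFER_TOPIC:
        return None
    return {
        "token_address": log["address"].lower(),
        "from_address": topic_to_address(topics[1]),
        "to_address": topic_to_address(topics[2]),
        # The non-indexed uint256 value is ABI-encoded in `data`. It is in the token's
        # smallest unit; scaling it needs the token's decimals, read from the contract.
        "raw_amount": int(log["data"], 16),
    }


# Made-up example log: a transfer of 1,000,000 base units from 0xaa..aa to 0xbb..bb.
example_log = {
    "address": "0x" + "11" * 20,
    "topics": [
        TRANSFER_TOPIC,
        "0x" + "00" * 12 + "aa" * 20,
        "0x" + "00" * 12 + "bb" * 20,
    ],
    "data": "0x" + format(1_000_000, "064x"),
}
print(decode_erc20_transfer(example_log))
```

Even this simple case depends on knowing the event signature, which fields are indexed, and where the token's decimals live; more complex protocols multiply that effort.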

Bronze tables hold the largest volume of data of the three layers, so queries that aggregate this data can take quite a long time to run.

Silver

Raw Bronze data is transformed into datasets that add value. This can include replacing codes with meaningful values, adding sanity constraints, filtering out unneeded information, etc. The goals of these tables are to unify data standards across EVM and non-EVM chains, to abstract data structures for different domains, and to create a de facto standard of business logic. Examples include nft_transactions, ethereum_token_transfer, protocol_transactions, contract_info, etc.
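The three kinds of transformation named above can be sketched in a few lines of Python. This is a hypothetical Bronze-to-Silver step, assuming Bronze transaction rows are dicts with `chain_id`, `status`, `hash`, `from`, `to` and `value` (in wei) fields; the function name, lookup table and output columns are illustrative assumptions, not Footprint's real schema.

```python
from decimal import Decimal
from typing import Iterable, Iterator

# Hypothetical lookup table: replaces numeric EVM chain IDs with readable names.
CHAIN_NAMES = {1: "ethereum", 56: "bsc", 137: "polygon"}


def bronze_to_silver(rows: Iterable[dict]) -> Iterator[dict]:
    """Clean hypothetical Bronze transaction rows into Silver-style records."""
    for row in rows:
        # Filter out unneeded information: failed transactions (receipt status 0).
        if row["status"] != 1:
            continue
        # Sanity constraint: a transaction hash is 32 bytes, i.e. "0x" + 64 hex chars.
        tx_hash = row["hash"].lower()
        if not (tx_hash.startswith("0x") and len(tx_hash) == 66):
            raise ValueError(f"malformed transaction hash: {row['hash']!r}")
        yield {
            # Replace a code with a meaningful value.
            "chain": CHAIN_NAMES.get(row["chain_id"], f"unknown_{row['chain_id']}"),
            "tx_hash": tx_hash,
            "from_address": row["from"].lower(),
            # `to` is empty for contract-creation transactions.
            "to_address": row["to"].lower() if row["to"] else None,
            # Native value is stored in wei; 1 native coin = 10**18 wei.
            "value": Decimal(row["value"]).scaleb(-18),
        }
```

Using a generator keeps the step streaming, so it could run over large Bronze partitions without loading them into memory at once.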

The main benefit of these tables is that users can easily switch between EVM and non-EVM chains, and between different marketplaces and protocols, because the data is organized semantically. Someone without knowledge of raw blockchain data structures can use these tables straight away.
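The benefit of a shared schema can be sketched with a single record type and one adapter per chain family. In this Python sketch, `TokenTransfer`, both adapter functions and the non-EVM field names (`mint`, `sender`, `receiver`, `amount`) are hypothetical; the EVM adapter assumes a decoded transfer dict with `token_address`, `from_address`, `to_address` and `raw_amount`.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal
from typing import Iterable


@dataclass(frozen=True)
class TokenTransfer:
    """Hypothetical chain-agnostic Silver record (not Footprint's actual schema)."""
    chain: str
    token: str
    from_address: str
    to_address: str
    amount: Decimal  # already scaled by the token's decimals


def from_evm(chain: str, log: dict, decimals: int) -> TokenTransfer:
    # EVM token amounts are integers in the token's smallest unit.
    amount = Decimal(log["raw_amount"]).scaleb(-decimals)
    return TokenTransfer(chain, log["token_address"], log["from_address"],
                         log["to_address"], amount)


def from_non_evm(chain: str, record: dict) -> TokenTransfer:
    # Made-up non-EVM layout: different field names, amount already a decimal string.
    return TokenTransfer(chain, record["mint"], record["sender"],
                         record["receiver"], Decimal(record["amount"]))


def volume_by_token(transfers: Iterable[TokenTransfer]) -> dict:
    """One query for every chain: total transferred amount per (chain, token)."""
    totals = defaultdict(Decimal)
    for t in transfers:
        totals[(t.chain, t.token)] += t.amount
    return dict(totals)
```

Because both adapters emit the same `TokenTransfer`, `volume_by_token` never needs to know which chain a record came from. The chain-specific knowledge lives in the adapters, which is the role Silver tables play for the person querying them.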

Gold

Gold tables contain business-level aggregations that directly answer domain-specific questions. They include many statistical metrics that are ready for analysis without any processing (joins and merges, decoding, etc.) within the query itself. No further calculation is needed to obtain statistics such as the PnL and holding balance of a wallet address; the metrics can be used as they are, so development teams save time on development, run-time computation, and data validation and testing, since all of it has already been validated by Footprint.
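As a rough illustration of moving work out of the query, here is a Python sketch of a hypothetical Gold job that precomputes holding balances from Silver-style transfers. It assumes each transfer is a dict with `token`, `from_address`, `to_address` and `amount` fields and that mints and burns follow the common ERC-20 convention of using the zero address; the function name and layout are assumptions, not Footprint's implementation.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Iterable

# Under the common ERC-20 convention, mints come from and burns go to the zero address.
ZERO_ADDRESS = "0x" + "0" * 40


def build_holding_balances(transfers: Iterable[dict]) -> dict:
    """Hypothetical Gold job: pre-aggregate transfers into balances keyed by
    (wallet, token), so queries need no joins or sums of their own."""
    balances = defaultdict(Decimal)
    for t in transfers:
        amount = Decimal(t["amount"])
        # The zero address is not a real holder: skipping it keeps mints and
        # burns from showing up as a fake wallet balance.
        if t["from_address"] != ZERO_ADDRESS:
            balances[(t["from_address"], t["token"])] -= amount
        if t["to_address"] != ZERO_ADDRESS:
            balances[(t["to_address"], t["token"])] += amount
    return dict(balances)
```

A dashboard asking for a wallet's balance then reads one precomputed entry, e.g. `balances[(wallet, token)]`, instead of summing the wallet's whole transfer history at query time. PnL would additionally require price data, which this sketch leaves out.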

Example tables: dex_pool_liquidity_daily_stats, lending_pool_daily_stats, etc.
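The `_daily_stats` tables suggest a time-bucketed rollup. As an illustration only, this Python sketch turns intraday liquidity snapshots into one row per pool per UTC day. The input fields (`pool`, `timestamp` as Unix seconds, `liquidity_usd`) and the output columns are assumptions, not the real schema of dex_pool_liquidity_daily_stats.

```python
from collections import defaultdict
from datetime import datetime, timezone
from typing import Iterable


def daily_pool_stats(snapshots: Iterable[dict]) -> list:
    """Roll hypothetical intraday liquidity snapshots up into one row per pool per UTC day."""
    buckets = defaultdict(list)
    for s in snapshots:
        day = datetime.fromtimestamp(s["timestamp"], tz=timezone.utc).date().isoformat()
        buckets[(s["pool"], day)].append((s["timestamp"], s["liquidity_usd"]))
    rows = []
    for (pool, day), points in sorted(buckets.items()):
        points.sort()  # order by timestamp so the last point is the closing value
        values = [v for _, v in points]
        rows.append({
            "pool": pool,
            "day": day,
            "min_liquidity_usd": min(values),
            "max_liquidity_usd": max(values),
            "close_liquidity_usd": values[-1],
            "snapshots": len(values),
        })
    return rows
```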
